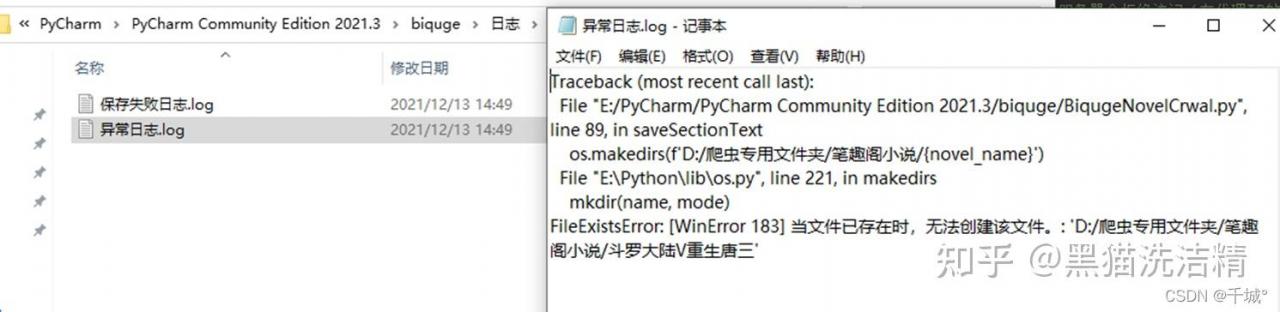

Fixing garbled text when scraping a novel:

Today I scraped a novel for a classmate. The site had no anti-scraping measures, but the scraped content still came out garbled.

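Fix: treating the fetched text as 'gbk' or 'utf-8' did not help. What worked was re-encoding the text returned by requests as 'iso-8859-1'. This recovers the original bytes, which lxml can then decode correctly. The most likely cause is that requests falls back to ISO-8859-1 when the server's Content-Type header does not declare a charset.

response = requests.get(url, headers=headers).text.encode('iso-8859-1')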
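The round trip works because ISO-8859-1 maps every byte to exactly one character, so encoding the garbled string back yields the original bytes unchanged. Here is a minimal offline sketch, assuming the page is served as UTF-8 (the sample string and variable names are illustrative, not taken from the site):

```python
# Illustrative sample text; assume the server sends it as UTF-8 bytes.
original = "第一章 圣墟"
raw_bytes = original.encode("utf-8")

# If the bytes are decoded as ISO-8859-1, the result is garbled text.
garbled = raw_bytes.decode("iso-8859-1")

# Encoding the garbled text back as ISO-8859-1 restores the exact bytes.
recovered_bytes = garbled.encode("iso-8859-1")

# The recovered bytes can now be decoded with the real encoding.
restored = recovered_bytes.decode("utf-8")
print(restored == original)
```

The same reasoning applies if the page is actually GBK: the recovered bytes are identical to what the server sent, whatever their encoding.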

Source code:

import time

import requests
from lxml import etree

headers = {
    'user-agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/79.0.3945.88 Safari/537.36',
    'Host': 'www.xbiquge.la',
    'Referer': 'http://www.xbiquge.la/13/13959/',
}

url = "http://www.xbiquge.la/13/13959/"

# Pass headers by keyword: the second positional argument of requests.get is params.
response = requests.get(url, headers=headers).text
# print(response)

# Collect the relative links to every chapter from the index page.
res = etree.HTML(response)
hrefs_list = res.xpath('//dl//dd/a/@href')
# print(hrefs_list)

for href in hrefs_list:
    url = "http://www.xbiquge.la" + href
    print(url)

    # Re-encode as ISO-8859-1 to recover the raw bytes, so lxml parses bytes rather than garbled text.
    response = requests.get(url, headers=headers).text.encode('iso-8859-1')
    res = etree.HTML(response)

    title = res.xpath('//div[@class="bookname"]/h1/text()')[0]
    content = res.xpath('//div[@id="content"]//text()')

    content_list = []
    for text in content:
        content_list.append(text)

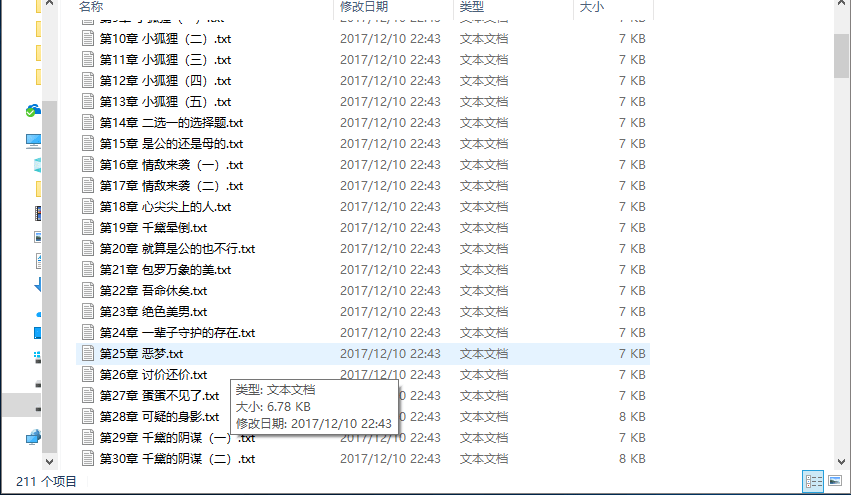

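    # The directory './小说 圣墟/' must already exist, otherwise open() raises FileNotFoundError.
    with open('./小说 圣墟/' + title + '.txt', 'w+', encoding='utf-8') as f:
        content = ''.join(content_list)
        print('Saving ' + title)
        f.write(content)

    # Pause briefly between chapters to avoid hammering the server.
    time.sleep(0.3)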
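The save step above fails if the output directory is missing or if a chapter title contains characters that are not valid in a file name. One way to guard against both is sketched below. `chapter_path` is a hypothetical helper introduced here for illustration, and the set of replaced characters assumes Windows file-name rules.

```python
import os
import re


def chapter_path(directory: str, title: str) -> str:
    """Return the .txt path for a chapter, creating the directory if needed."""
    os.makedirs(directory, exist_ok=True)
    # Replace characters that Windows does not allow in file names.
    safe_title = re.sub(r'[\\/:*?"<>|]', '_', title).strip()
    return os.path.join(directory, safe_title + '.txt')
```

In the loop, `open(chapter_path('./小说 圣墟', title), 'w', encoding='utf-8')` could then stand in for the string concatenation.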

The code does not deal with any anti-scraping measures. It is meant only as a reference for beginners.
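An alternative to the ISO-8859-1 round trip is to skip `.text` and decode the raw bytes directly. With requests these are available as `response.content`. The sketch below assumes the page is either UTF-8 or GBK. `decode_page` and its `candidates` parameter are hypothetical names for illustration, not part of requests.

```python
def decode_page(raw: bytes, candidates=("utf-8", "gbk")) -> str:
    """Decode raw page bytes by trying each candidate encoding in order."""
    for encoding in candidates:
        try:
            return raw.decode(encoding)
        except UnicodeDecodeError:
            # These bytes are not valid in this encoding; try the next one.
            continue
    # Nothing matched: fall back to UTF-8 and mark undecodable bytes.
    return raw.decode("utf-8", errors="replace")
```

UTF-8 is tried first because GBK-encoded Chinese text is rarely valid UTF-8, whereas UTF-8 bytes often decode as GBK without an error and produce wrong characters.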